What Is Drip Feed Indexing? A Complete 2026 Guide

A study of over one million URLs found that 40% of new web pages fail to be indexed by Google within their first month. For backlinks — particularly those on lower-authority pages with thin crawl budgets — the number is higher still.

Drip feed indexing is one of the most practical responses to this problem. It doesn't change the links you've built or the pages they live on. It changes when those links are submitted — and that timing makes a meaningful difference to whether Google processes them or ignores them.

If you're new to the mechanics of how drip feed works, our drip feed indexing page covers the core process, the link type schedule table, and how to set up a campaign. This guide goes deeper: the distinction between drip feed indexing and drip feed link building, how to choose your schedule based on campaign volume, and what to do when a campaign finishes and results are mixed.

Drip feed link building and drip feed indexing are not the same thing

This distinction matters more than it might seem, because confusing the two leads to using the wrong approach for the wrong problem.

Drip feed link building means acquiring new backlinks gradually over time — getting one link per week from different sources rather than building fifty links in a single day. The goal is to keep your link velocity within a range that looks natural to Google's algorithms.

Drip feed indexing means something different. Your links already exist. You've already built them. Drip feed indexing is about how you submit those existing links to an indexing service — in daily batches over a period of days or weeks, rather than all at once.

The problem being solved is also different:

- Drip feed link building addresses link velocity — the rate at which new links appear pointing to your site

- Drip feed indexing addresses crawl budget and submission signals — the pattern in which indexing requests reach Google's crawl queue

You can do one without the other. You can build links slowly and still submit them all to an indexer on the same day. You can also build links quickly and then drip feed the submissions. They're separate decisions about separate stages of the process.

Most guides online use these terms interchangeably. That creates confusion when someone is trying to fix an indexing problem — they end up applying link building advice to an indexing question and wonder why nothing changes.

How to choose your drip feed schedule

Most advice on drip feed scheduling says something like "go slowly" and leaves it there. That isn't useful when you're sitting in front of a submission form deciding between a 7-day and a 21-day schedule.

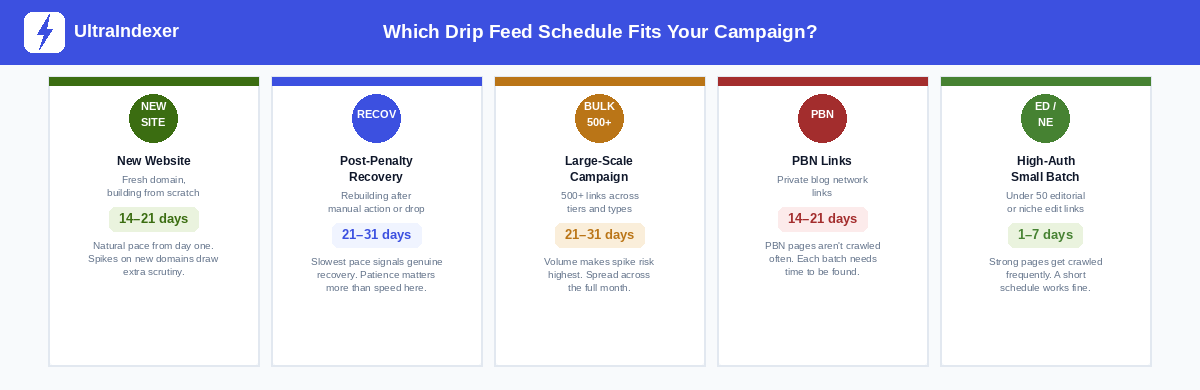

The right schedule depends on two things: the volume of links you're submitting, and the authority level of the pages they live on. Higher volume and lower authority both push toward a longer schedule.

These are guidelines, not rules. Your specific campaign may warrant adjustments based on link quality, the domain authority of the linking pages, and how time-sensitive the campaign is. But the logic behind the table holds:

Volume increases risk. Submitting 500 links in a short window creates a larger signal spike than submitting 50. The same daily submission rate that looks reasonable for a 50-link campaign looks aggressive for a 500-link one.

Authority reduces risk. High-authority editorial links live on pages that Googlebot already crawls frequently. A submission spike there is less unusual because the page already receives regular crawl activity. A web 2.0 property that gets visited once a month has no buffer — a sudden submission burst stands out against a flat crawl history.

The links-per-day number matters more than the total days. A 500-link campaign on a 7-day schedule means roughly 71 links per day. The same campaign on a 21-day schedule means roughly 24 per day. The daily rate is what Googlebot's systems actually see — not the total volume.
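The arithmetic above can be checked with a short Python sketch. The function name `links_per_day` is a hypothetical helper introduced here for illustration, and it assumes links are spread evenly across the schedule.

```python
def links_per_day(total_links: int, schedule_days: int) -> int:
    """Average daily submissions, rounded to the nearest whole link.

    Assumes an even spread across the schedule (an illustrative
    simplification, not a description of any particular service).
    """
    if total_links < 0:
        raise ValueError("total_links must not be negative")
    if schedule_days < 1:
        raise ValueError("schedule_days must be at least 1")
    return round(total_links / schedule_days)


# The 500-link example from the text, on a 7-day and a 21-day schedule.
for days in (7, 21):
    print(f"500 links over {days} days: about {links_per_day(500, days)} per day")
```

Running the same calculation before choosing a schedule makes the daily rate, rather than the campaign length, the number you compare across options.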

One mistake that defeats the purpose of drip feed entirely

It seems obvious once you see it, but it's one of the most common errors in drip feed campaigns.

Setting a 1-day drip schedule on a large batch of links is functionally identical to bulk submission.

If you have 500 links and choose a 1-day schedule, all 500 go in on day one. You've used a drip feed service to achieve exactly the same outcome as clicking "submit all." The crawl spike is the same. The velocity signal is the same. The risk profile is the same.

Drip feed only does its job when the daily submission rate is low enough to look natural relative to the linking pages' crawl history. A web 2.0 property that normally gets one Googlebot visit per week doesn't benefit from receiving 500 indexing signals in a single day — regardless of whether those signals were sent through a drip feed service or a bulk submission tool.

The minimum meaningful schedule for a large campaign depends on the link types involved. For a 500-link batch of web 2.0s and tier-2 links, a 21-day schedule puts roughly 24 submissions per day through the pipeline — a rate that sits within what a moderately active campaign would produce naturally.
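To make the 1-day problem concrete, here is a minimal Python sketch of how a list of URLs might be divided into daily batches. The function `split_into_daily_batches` and the `example.com` URLs are hypothetical, and real indexing services may distribute submissions differently.

```python
def split_into_daily_batches(urls: list[str], days: int) -> list[list[str]]:
    """Divide urls into `days` consecutive batches of near-equal size.

    Earlier days receive one extra URL when the total does not divide
    evenly. If there are fewer URLs than days, later batches are empty.
    """
    if days < 1:
        raise ValueError("days must be at least 1")
    base, extra = divmod(len(urls), days)
    batches = []
    start = 0
    for day in range(days):
        size = base + (1 if day < extra else 0)
        batches.append(urls[start:start + size])
        start += size
    return batches


# Placeholder URLs standing in for a 500-link campaign.
urls = [f"https://example.com/page-{i}" for i in range(500)]

one_day = split_into_daily_batches(urls, 1)
three_weeks = split_into_daily_batches(urls, 21)

print(len(one_day), "batch of", len(one_day[0]))        # one batch holding everything
print(len(three_weeks), "batches, largest", max(len(b) for b in three_weeks))
```

With `days=1` the function returns a single batch holding all 500 URLs, which is bulk submission under another name. With `days=21` no batch holds more than 24.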

Which campaign type needs which schedule

Beyond volume and link authority, the context of your campaign shapes the right approach. Here are the five situations that come up most often.

New websites and fresh domains have no crawl history for Googlebot to compare against. There's no established baseline of link activity. A slow drip builds that baseline from the start — which is exactly what a genuinely growing site looks like.

Post-penalty recovery is the situation where patience matters most. If a site has received a manual action or was hit by an algorithm update related to its link profile, the last thing it needs is a burst of new indexing signals. A 21–31 day schedule signals organic, measured growth — the opposite of the patterns that triggered scrutiny in the first place.

Large-scale campaigns with 500 or more links represent the highest spike risk. The volume alone makes a longer schedule necessary. Spreading 1,000 links across 31 days keeps the daily rate at roughly 32 submissions — manageable and unremarkable.

PBN links sit on pages that Googlebot visits infrequently. Their crawl budget is limited and their activity history is thin. A faster schedule relative to that baseline looks noisier than it would on a high-traffic editorial site. Give each batch time to be discovered before the next one arrives.

Small high-authority batches — under 50 editorial links or niche edits on established pages — are the one case where a short schedule or even instant submission is genuinely fine. These pages are crawled frequently. A new indexing signal on an already-active page raises no flags.

How to know if a drip feed campaign is working

This is the question every guide skips, and it's a practical one. You've set a 14-day campaign. It's day 7. What should you be seeing?

At the midpoint, partial indexing is normal. If roughly half your early-batch links have indexed by the campaign's midpoint, that's a reasonable signal. The second half of your submissions hasn't gone in yet — some indexing of early batches while later batches are still processing is expected and healthy.

Zero indexing at the midpoint is a warning sign. If none of your links have indexed halfway through a campaign, the issue is likely on the linking page side, not the submission side. Check whether those pages are indexed themselves. A link on an unindexed page cannot be processed regardless of how it was submitted.

Don't triage mid-campaign. Wait for the final report. Your per-URL report is the real measurement — it shows exactly which links indexed and which didn't, with timestamps. Acting on partial data mid-campaign leads to double submissions and confused reporting.

What a good campaign result looks like. There is no universal indexing rate to aim for — it depends entirely on link type and page quality. Niche edits on established pages should index at a higher rate than web 2.0 properties. If your niche edit campaign shows a low result, that tells you something about the quality of the placements. If your web 2.0 campaign shows a similar result, that may simply be the nature of those links. Compare like for like.

What to do with non-indexed links after the report:

- Check whether the linking page is indexed. Run site:[linking-page-url] in Google. If the page isn't indexed, the link has no path to being processed, no matter how many times you submit it.

- Confirm the link is still live. Content gets updated and pages get restructured. Verify the URL still resolves, the page loads, and your link is still present in the content.

- Use a bulk index checker before resubmitting. Some links may have indexed after the report was generated. Check current status on all non-indexed URLs before resubmitting — you don't want to spend credits on links that are already indexed.

- Resubmit with a longer timeline. If the first campaign used a 7-day spread, try 14–21 days for the resubmission. The pages clearly need more time between signals.

- Accept a small proportion won't index. Some backlinks on low-quality pages will not index regardless of how many times they're submitted. Write them off and focus effort on the recoverable ones.
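The checklist above can be sketched as a small triage routine in Python. This is an illustration under stated assumptions: the `LinkCheck` record, its field names, and the outcome labels are all hypothetical, and the fields simply record the results of the manual checks described in the list. The order in which the checks are applied is also a choice made here, putting the already-indexed check first so credits aren't spent unnecessarily.

```python
from dataclasses import dataclass


@dataclass
class LinkCheck:
    """Results of the manual checks for one non-indexed URL (hypothetical record)."""
    url: str
    indexed_now: bool    # bulk index checker says it has indexed since the report
    link_live: bool      # URL resolves, page loads, link still present
    page_indexed: bool   # the site: check found the linking page


def triage(check: LinkCheck) -> str:
    """Return an outcome label for one link; labels are illustrative only."""
    if check.indexed_now:
        return "already_indexed"            # no resubmission needed
    if not check.link_live:
        return "link_not_live"              # nothing to index until the link is restored
    if not check.page_indexed:
        return "linking_page_not_indexed"   # resubmitting will not help yet
    return "resubmit_longer_schedule"       # candidate for a longer second pass


def group_by_outcome(checks: list[LinkCheck]) -> dict[str, list[str]]:
    """Group URLs by triage outcome so each group can be handled together."""
    groups: dict[str, list[str]] = {}
    for check in checks:
        groups.setdefault(triage(check), []).append(check.url)
    return groups


# Placeholder data for illustration.
checks = [
    LinkCheck("https://example.com/a", indexed_now=True, link_live=True, page_indexed=True),
    LinkCheck("https://example.com/b", indexed_now=False, link_live=False, page_indexed=True),
    LinkCheck("https://example.com/c", indexed_now=False, link_live=True, page_indexed=False),
    LinkCheck("https://example.com/d", indexed_now=False, link_live=True, page_indexed=True),
]
groups = group_by_outcome(checks)
print(groups)
```

Only the URLs in the `resubmit_longer_schedule` group go into the second campaign; the rest are either done or blocked on something resubmission cannot fix.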

Running multiple drip feed campaigns as an agency

Managing a single campaign is straightforward. Managing five simultaneous campaigns for different clients requires a bit more structure.

Stagger your start dates. If you launch all campaigns on the same day, all your reports arrive at the same time. That creates a review bottleneck. Stagger campaign starts by two to three days so reports come in spread across a week rather than simultaneously.
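A staggered launch calendar is simple to generate. The Python sketch below assumes a fixed gap between campaign starts; the function name, the campaign names, and the start date are placeholders chosen for illustration.

```python
from datetime import date, timedelta


def staggered_start_dates(
    campaigns: list[str], first_start: date, gap_days: int = 2
) -> dict[str, date]:
    """Assign each campaign a start date `gap_days` after the previous one."""
    if gap_days < 0:
        raise ValueError("gap_days must not be negative")
    return {
        name: first_start + timedelta(days=index * gap_days)
        for index, name in enumerate(campaigns)
    }


# Three hypothetical client campaigns, launched three days apart.
starts = staggered_start_dates(
    ["client-a", "client-b", "client-c"], date(2026, 1, 5), gap_days=3
)
for name, start in starts.items():
    print(name, start.isoformat())
```

Because each report follows its campaign by a fixed delay, campaigns of equal length that start on different days also report on different days.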

Keep link types separated by campaign. Mixing tier-1 editorial links with web 2.0s in a single campaign makes the report harder to interpret and forces you to choose one submission pace that won't be right for either type. A campaign of 50 editorial links should run on a different timeline from a campaign of 200 tier-2 links. Separate them from submission through to reporting.

Report indexing rate alongside link count. Clients understand "we built 100 links this month." They understand it even better when you show them "we built 100 links: 78 are confirmed indexed, 14 are in a second submission pass, and 8 are on pages that aren't being crawled." That level of transparency is professional, and most agencies don't provide it.
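A client summary of this kind can be derived from per-URL statuses with a few lines of Python. The status labels (`indexed`, `resubmitted`, `not_crawled`) and the `summarize` function are assumptions made for this sketch; a real per-URL report may use different fields.

```python
from collections import Counter


def summarize(statuses: list[str]) -> dict[str, float]:
    """Count links by status and compute the share confirmed indexed."""
    counts = Counter(statuses)
    total = len(statuses)
    indexed = counts["indexed"]
    return {
        "total": total,
        "indexed": indexed,
        "resubmitted": counts["resubmitted"],
        "not_crawled": counts["not_crawled"],
        # Guard against an empty report to avoid dividing by zero.
        "indexing_rate": indexed / total if total else 0.0,
    }


# The 100-link example from the text: 78 indexed, 14 resubmitted, 8 not crawled.
statuses = ["indexed"] * 78 + ["resubmitted"] * 14 + ["not_crawled"] * 8
summary = summarize(statuses)
print(
    f"Built {summary['total']} links: {summary['indexed']} indexed "
    f"({summary['indexing_rate']:.0%}), {summary['resubmitted']} in a second pass, "
    f"{summary['not_crawled']} on pages that aren't being crawled."
)
```

Keeping one summary per campaign and link type also gives you the history needed to judge what a normal result looks like for each.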

Set indexing rate targets per campaign type. Once you've run a few campaigns, you'll build a picture of what a normal result looks like for each link type you use. Guest posts from your outreach network might consistently index at a certain rate. Web 2.0 properties from a particular vendor might land lower. Track these numbers over time — they tell you where to spend more and where to stop.

Build the review step into your delivery timeline. The post-report review and resubmission pass isn't optional — it's where a significant proportion of the value in a campaign is recovered. Factor three to five days of post-report work into your client delivery calendar so resubmissions don't get skipped under deadline pressure.

What drip feed indexing does not fix

Drip feed indexing solves the submission timing problem. It doesn't solve anything else. It's worth being specific about this, because the limits of the technique are as important as the benefits.

It doesn't fix links on unindexed pages. If the page your link lives on isn't indexed by Google, no submission approach — drip feed or otherwise — will get that link processed. The page has to enter Google's index first. That's a problem with the linking domain, not with your submission.

It doesn't improve the quality of weak links. A link on a thin, low-traffic page with no external references is a weak link. A well-timed drip feed submission may get it indexed, but indexing doesn't give the link authority it never had. The ranking power a link passes is determined by the quality of the linking page, not by how it was submitted.

It doesn't guarantee a ranking improvement. Getting a link indexed means Google has processed it. Whether that processed link improves your rankings depends on your overall link profile, the competitiveness of your target keywords, the relevance of the linking page, and a long list of other factors that indexing doesn't touch.

It doesn't speed up crawling on demand. Drip feed indexing sends signals to Googlebot — it doesn't control when Googlebot responds. On a low-authority page with a limited crawl budget, even a well-timed submission may take days to be picked up. Drip feed improves the probability of that pickup happening; it doesn't guarantee the timing.

Understanding these limits makes the technique more useful, not less. You use drip feed indexing to solve the specific problem it solves — submission timing and crawl signal distribution — and you address the other problems (page quality, link relevance, domain authority) through different means.

Frequently asked questions

What is the difference between drip feed indexing and drip feed link building?

Drip feed link building means acquiring new backlinks gradually over time to maintain a natural link velocity. Drip feed indexing means submitting already-built backlinks to an indexing service in daily batches rather than all at once. They address different problems at different stages of the SEO process and can be used independently of each other.

How many links should I submit per day in a drip feed campaign?

There's no single right answer — it depends on your link types and the authority of the linking pages. As a general guide: under 50 links total can run at 7–50 per day on a short schedule; 150–500 links should run at 15–30 per day over 14–21 days; 500+ links work best at 16–24 per day over a 21–31 day schedule. The table in this article gives the full breakdown by volume range.

Can I use drip feed indexing for both tier-1 and tier-2 links?

Yes, but run them as separate campaigns with different schedules. Tier-1 editorial links on high-authority pages can use a shorter schedule — 1–7 days. Tier-2 links, web 2.0s, and lower-authority placements need more time — 14–31 days depending on volume. Mixing them in one campaign makes it harder to read the results and forces you to choose a single schedule that may not be right for either type.

What should I do if a large proportion of my links didn't index after a drip feed campaign?

Start by checking whether the linking pages themselves are indexed — that's the most common cause of poor indexing rates that isn't related to submission method. Then check whether the links are still live. For confirmed non-indexed links on indexed pages, resubmit as a fresh campaign with a longer schedule than the first attempt. For links on unindexed pages, either ask the publisher to get their page indexed first, or accept the link won't contribute until that changes.

Does drip feed indexing guarantee my links will rank?

No. Drip feed indexing improves the proportion of your links that get indexed — it addresses the gap between a link being built and a link being processed by Google. Whether an indexed link improves rankings depends on the link's quality, relevance, anchor text, the competitiveness of your target keywords, and many other factors that indexing doesn't control. Getting a link indexed is a prerequisite for it working — not a guarantee that it will.

If you want to run a drip feed campaign, UltraIndexer's drip feed indexing lets you set any schedule from 1 to 31 days. Credits never expire. Your per-URL report is ready 7 days after your final batch completes.

After your campaign, use the bulk index checker to confirm which links are indexed and which need resubmission — up to 5,000 URLs per check.